# Reinforcement Learning Notes: State and Action Values

# 1. State Value / State Value Function

vπ(s)=E[Gt∣St=s]

- The state value function vπ(s) represents the expected discounted return when starting in state s and following policy π.

- Core Concept: Discounted return average upon a given policy.

- Deterministic Scenario: If the policy π(a∣s), transition probability p(s′∣s,a), and reward p(r∣s,a) are all deterministic, the State Value is identical to the Return.

# 2. Bellman Equation

The Bellman equation characterizes the relationship among the state-value functions of different states.

vπ(s)=a∑π(a∣s)[r∑p(r∣s,a)r+γs′∑p(s′∣s,a)vπ(s′)],∀s∈S

- π(a∣s) is a given policy, which assigned a probability for action a at state s.

- Solving the Bellman equation is called Policy Evaluation.

vπ=rπ+γPπvπ

- vπ=[vπ(s1),…,vπ(sn)]T is the value function at all states.

- rπ=[rπ(s1),…,rπ(sn)]T (Immediate reward under policy π)

- Calculation: rπ(s)=∑aπ(a∣s)∑rp(r∣s,a)r

- Pπ: State transition matrix under policy π.

# 3. Solving State Values (for a certain policy)

State values can be obtained through:

- Direct Solution: vπ=(I−γPπ)−1rπ

- Iterative Solution: vk+1=rπ+γPπvk, where subscript k represents iterate step.

# 4. Action Value

The action value function qπ(s,a) is the expected return starting from state s, taking action a, and following policy π.

qπ(s,a)=E[Gt∣St=s,At=a]

vπ(s)=a∑π(a∣s)qπ(s,a)

qπ(s,a)=r∑p(r∣s,a)r+γs′∑p(s′∣s,a)vπ(s′)

- Compare different actions and select the one with the maximum value (Greedy selection).

# Optimal Policy and Iterative Algorithms

# 1. Bellman Optimal Equation

An optimal policy π∗ is optimal if vπ∗(s)≥vπ(s) for all s and for any other policy π.

For all s∈S:

v(s)=πmaxa∑π(a∣s)[r∑p(r∣s,a)r+γs′∑p(s′∣s,a)v(s′)]=πmaxa∑π(a∣s)q(s,a)

- Given probability or system model: p(r∣s,a),p(s′∣s,a)

- Reward r

- Gamma γ

v=πmax(rπ+γPπv)

The optimal solution is a deterministic greedy policy:

- π∗(a∣s)=1 if a=a∗, else 0.

- a∗=argmaxaq(s,a).

f(v):=πmax(rπ+γPπv)

v=f(v)

where

[f(v)]s=πmaxa∑π(a∣s)q(s,a),s∈S

# 2. Contraction Mapping Theorem

A fixed-point problem: v=f(v).

- Theorem: If f is a contraction mapping

∣∣f(x1)−f(x2)∣∣≤γ∣∣x1−x2∣∣, where γ∈(0,1)

, then a unique fixed point x∗ exists.

- Algorithm: The sequence xk+1=f(xk) converges to x∗, when k→∞.

- Application: f(v)=v in RL is a contraction mapping fix point problem.

# 3. Value Iteration (VI) Algorithm

vk+1=f(vk)=πmax(rπ+γPπvk)

- Step 1 Policy update: πk+1=argmaxπ(rπ+γPπvk). Use current vk select action that maximize qk(s,a)

- Step 2: vk+1=rπk+1+γPπk+1vk.

- Note: vk is not the true state value of the intermediate policy. Since vk does not satisfy Bellman equation.

- Step 3: Stop iteration when vk changes below the threshold.

q-table explicit expression of q(s,a), list all states and actions on a matrix.

# 4. Policy Iteration (PI) Algorithm

- Step 0: Initial random π0

- Step 1: Policy Evaluation (PE): Solve

vπk=rπk+γPπkvπk.

Closed form: vπk=(I−γPπk)−1rπk

Iterative: v(j+1)=r+γPv(j)

- Step 2: Policy Improvement (PI): πk+1=argmaxπ(rπ+γPπvπk).

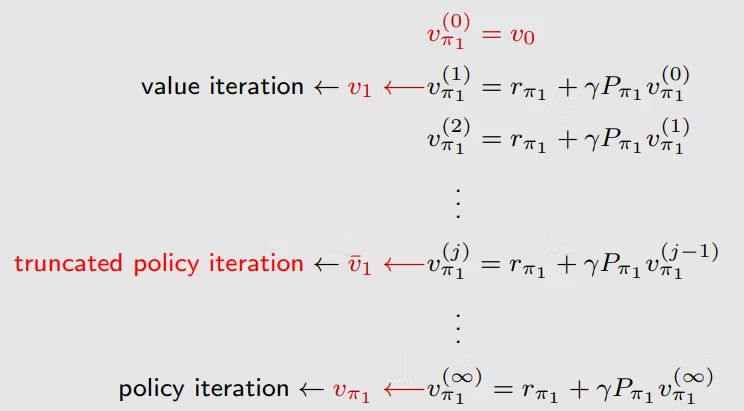

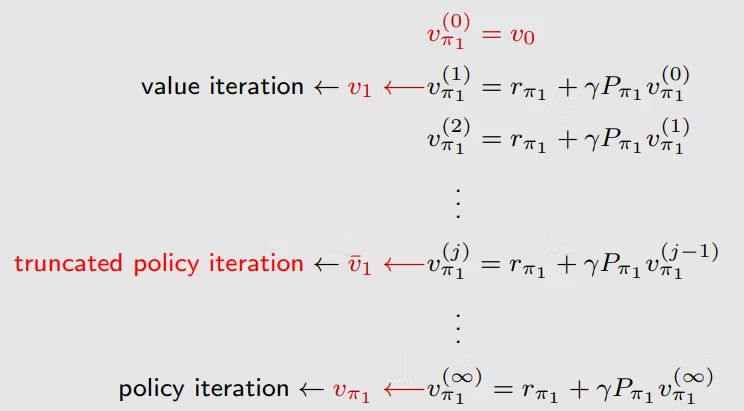

# 5. Comparison: VI and PI

When solving for the value function:

- Value Iteration: Only 1 step of evaluation (j=1) before updating π.

- Truncated PI: j steps of evaluation.

- Policy Iteration: Full evaluation (j→∞) before updating π.